AWS Storage:

Cloud storage is a cloud computing model that enables storing data and files on the internet through a cloud computing provider that you access either through the public internet or a dedicated private network connection. The provider securely stores, manages, and maintains the storage servers, infrastructure, and network to ensure you have access to the data when you need it at virtually unlimited scale, and with elastic capacity.

Importance of AWS Storage:

-> Cost Effectiveness: With cloud storage, there is no hardware to purchase, no storage to provision, and no extra capital being used for business spikes. You can add or remove storage capacity on demand, quickly change performance and retention characteristics, and only pay for storage that you actually use.

-> Agility: With cloud storage, resources are only a click away. You reduce the time to make those resources available to your organization from weeks to just minutes.

-> Faster deployment: Cloud storage services allow IT to quickly deliver the exact amount of storage needed, whenever and wherever it's needed.

-> Scalability: Cloud storage delivers virtually unlimited storage capacity. Users can access storage from anywhere, at any time, without worrying about complex storage allocation processes or waiting for new hardware.

-> Business Continuity: Cloud storage providers store your data in highly secure data centers, protecting your data and ensuring business continuity. Cloud storage services are designed to handle concurrent device failure by quickly detecting and repairing any lost redundancy.

Types of storage:

There are three main types of cloud storage:

1) Object storage: Object storage is a data storage architecture for large stores of unstructured data. It stores data in the format it arrives in and makes it possible to customize metadata in ways that make the data easier to access and analyze. Instead of being organized in files or folder hierarchies, objects are kept in secure buckets that deliver virtually unlimited scalability. It is also less costly for storing large data volumes.

2) File storage: File storage, also called file-level or file-based storage, is exactly what you think it might be: data is stored as a single piece of information inside a folder, just like you'd organize pieces of paper inside a manila folder. When you need to access that piece of data, your computer needs to know the path to find it. File storage is widely used among applications and stores data in a hierarchical folder and file format. This type of storage is often provided by a network-attached storage (NAS) server, using common file-level protocols such as Server Message Block (SMB), used by Windows instances, and Network File System (NFS), found in Linux.

3) Block Storage: Enterprise applications like databases or enterprise resource planning (ERP) systems often require dedicated, low-latency storage for each host. This is analogous to direct-attached storage (DAS) or a storage area network (SAN). In this case, you can use a cloud storage service that stores data in the form of blocks. Each block has its own unique identifier for quick storage and retrieval.
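To make the difference between object and block addressing a bit more concrete, here is a toy in-memory sketch. The class names (`ObjectStore`, `BlockStore`) and the tiny block size are made up for illustration and are not AWS APIs; real services are far more involved.

```python
class ObjectStore:
    """Flat key -> (data, metadata) mapping, like objects in a bucket."""

    def __init__(self):
        self._objects = {}

    def put(self, key, data, **metadata):
        # The key is just a string; "reports/2024.csv" is not a real folder path.
        self._objects[key] = (bytes(data), dict(metadata))

    def get(self, key):
        return self._objects[key]


class BlockStore:
    """Fixed-size blocks, each addressed by its own identifier."""

    def __init__(self, block_size=4):
        self.block_size = block_size
        self._blocks = {}

    def write(self, data):
        """Split data into blocks and return the list of block ids used."""
        ids = []
        for offset in range(0, len(data), self.block_size):
            block_id = len(self._blocks)
            self._blocks[block_id] = data[offset:offset + self.block_size]
            ids.append(block_id)
        return ids

    def read(self, ids):
        """Reassemble data from the given block ids, in order."""
        return b"".join(self._blocks[i] for i in ids)


bucket = ObjectStore()
bucket.put("reports/2024.csv", b"a,b\n1,2\n", content_type="text/csv")

disk = BlockStore(block_size=4)
block_ids = disk.write(b"hello world")
print(block_ids)             # [0, 1, 2]
print(disk.read(block_ids))  # b'hello world'
```

The object store keeps the data whole and attaches custom metadata to it, while the block store only knows about numbered chunks and leaves it to the caller (normally a file system) to remember which blocks belong together.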

AWS STORAGE SERVICES:

1) Simple Storage Service (S3):

S3 can store data for any business use case, such as web applications, mobile applications, backup, archive, and analytics. It gives you easy access-control management for your specific requirements. It also provides a simple web-based file explorer to upload files, create folders, or delete them.

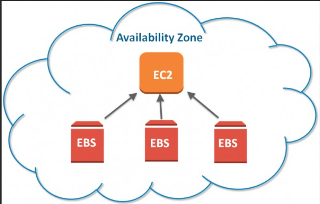

2) Elastic Block Store (EBS):

EBS provides storage that behaves like a hard drive and can hold any kind of data. A volume can be attached to an EC2 instance and used as block storage, which allows you to install an operating system on it. EBS offers low-latency performance and lets you scale resources up or down. It is available in both SSD and HDD formats, depending on requirements.

Features of EBS:

-> Scalability: EBS volume sizes and features can be scaled as per the needs of the system. This can be done in two ways:

. Take a snapshot of the volume and create a new volume from the snapshot with the updated features.

. Update the existing EBS volume from the console.

-> Backup: Users can create snapshots of EBS volumes that act as backups.

. Snapshots can be created manually at any point in time or can be scheduled.

. Snapshots are stored in Amazon S3 and are charged per GB of snapshot data stored.

. Snapshots are incremental in nature.

. New volumes across regions can be created from snapshots.

-> Encryption: Encryption can be a basic requirement when it comes to storage, often because of government or regulatory compliance. EBS offers an AWS-managed encryption feature.

. Users can enable encryption when creating EBS volumes by clicking on a checkbox.

. Encryption Keys are managed by the Key Management Service (KMS) provided by AWS.

. Encrypted volumes can only be attached to selected instance types.

. Encryption uses the AES-256 algorithm.

. Snapshots of encrypted volumes are encrypted and, similarly, volumes created from encrypted snapshots are encrypted.

-> Charges: Unlike AWS S3, where you are charged for the storage you consume, EBS charges you for the storage you provision. For example, if you use 1 GB of storage in a 5 GB volume, you'd still be charged for a 5 GB EBS volume.

-> EBS charges vary from region to region.

-> EBS volumes are independent of the EC2 instance they are attached to. The data in an EBS volume remains unchanged even if the instance is rebooted or terminated, provided the volume is not set to be deleted on termination (which is the default for root volumes).

-> By default, a single EBS volume can be attached to only one EC2 instance at a time. However, one EC2 instance can have more than one EBS volume attached to it.
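The "snapshots are incremental" point under Backup can be illustrated with a toy model. This is only a sketch under simple assumptions: a volume is a dict of block id to bytes, the function names `take_snapshot` and `restore` are made up, and deleted blocks are not handled. It is not how EBS is implemented internally.

```python
def restore(snapshots):
    """Rebuild a full volume image by replaying snapshots, oldest first."""
    image = {}
    for snap in snapshots:
        image.update(snap)
    return image


def take_snapshot(volume, previous_snapshots):
    """Return a snapshot holding only the blocks changed since the last one."""
    already_saved = restore(previous_snapshots)
    return {
        block_id: data
        for block_id, data in volume.items()
        if already_saved.get(block_id) != data
    }


volume = {0: b"boot", 1: b"data"}
snap1 = take_snapshot(volume, [])          # first snapshot copies everything

volume[1] = b"DATA"                        # modify one block
volume[2] = b"logs"                        # add a new block
snap2 = take_snapshot(volume, [snap1])     # second snapshot stores only the changes

print(sorted(snap1))                       # [0, 1]
print(sorted(snap2))                       # [1, 2]
print(restore([snap1, snap2]) == volume)   # True
```

Because the second snapshot stores only blocks 1 and 2, it takes less space than a full copy, yet replaying both snapshots still gives back the complete volume.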

Types of EBS Volumes:

a) SSD: This storage type is suitable for small chunks of data that require fast IOPS. SSDs can be used as root volumes for EC2 instances.

-> General Purpose SSD (GP2): Offers single-digit millisecond latency. Can burst to 3,000 IOPS. Baseline performance scales at 3 IOPS per GB, up to 10,000 IOPS. Throughput is 128 MB/s for volumes up to 170 GB, after which it increases by 768 KB/s per GB and peaks at 160 MB/s.

-> Provisioned IOPS SSD (IO1): These SSDs are meant for I/O-intensive workloads.

. Users can specify the IOPS requirement during creation.

. Volume size ranges from 4 GB to 16 TB. According to AWS, these volumes, when attached to EBS-optimized instances, are designed to deliver within 10% of the provisioned IOPS 99.9% of the time over a year.

. The maximum is 20,000 IOPS.

b) HDD: This storage type is suitable for large chunks of data and slower processing. These volumes cannot be used as root volumes for EC2. According to AWS, these volumes provide the expected throughput 99.9% of the time over a year.

-> Cold HDD (SC1): SC1 is the cheapest of all EBS volume types. It is suitable for large, infrequently accessed data. The maximum burst speed offered is 250 MB/s.

-> Throughput Optimized HDD (ST1): Suitable for large, frequently accessed data. Burst speed ranges from 250 MB/s to 500 MB/s.
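As a worked example of the General Purpose SSD throughput figures quoted above, the following sketch computes the throughput for a given volume size. It follows one literal reading of those numbers (128 MB/s up to 170 GB, then +768 KB/s for every extra GB, capped at 160 MB/s) and assumes 1 MB = 1000 KB to keep the arithmetic in whole numbers. The function name is made up, and the limits are the ones from this post, so check the current AWS documentation before relying on them.

```python
BASE_KBPS = 128_000        # 128 MB/s, expressed in KB/s (assuming 1 MB = 1000 KB)
STEP_KBPS_PER_GB = 768     # extra throughput per GB above the threshold
THRESHOLD_GB = 170         # size up to which throughput stays flat
PEAK_KBPS = 160_000        # 160 MB/s cap


def gp2_throughput_kbps(size_gb):
    """Throughput in KB/s for a volume of size_gb, per the figures in this post."""
    if size_gb <= THRESHOLD_GB:
        return BASE_KBPS
    extra = STEP_KBPS_PER_GB * (size_gb - THRESHOLD_GB)
    return min(PEAK_KBPS, BASE_KBPS + extra)


for size in (100, 200, 500):
    print(size, "GB ->", gp2_throughput_kbps(size), "KB/s")
# 100 GB -> 128000 KB/s
# 200 GB -> 151040 KB/s
# 500 GB -> 160000 KB/s
```

Under this reading, a 200 GB volume gets 128,000 + 30 x 768 = 151,040 KB/s, and anything from roughly 212 GB upward sits at the 160 MB/s peak.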

Drawbacks:

- EBS is not recommended as temporary storage.

- They cannot be used as a multi-instance accessed storage as they cannot be shared between instances.

- The durability offered by services like AWS S3 and AWS EFS is greater.

3) Elastic File System (EFS):

EFS is a managed network file system that is easy to set up right from the AWS console. EFS provides access to the same files from multiple EC2 instances. EFS scales with the size of the files stored and is also accessible from multiple Availability Zones.

Features of EFS:

1. Storage capacity: Theoretically, EFS provides an infinite amount of storage capacity. This capacity grows and shrinks as required by the user.

2. Fully Managed: Being an AWS managed service, EFS takes away the overhead of creating, managing, and maintaining file servers and storage.

3. Multi EC2 Connectivity: EFS can be shared between any number of EC2 instances by using mount targets.

Note: A mount target is an access point for AWS EFS that is attached to EC2 instances, allowing them access to the EFS.

4. Availability: AWS EFS is region specific; however, it can be present in multiple Availability Zones in a single region. EC2 instances across different Availability Zones can connect to the EFS in their own zone for quicker access.

5. EFS Lifecycle Management: Lifecycle management moves files between storage classes. Users can select a retention period parameter (in number of days). Any file in Standard storage that is not accessed for this time period is moved to the Infrequent Access (IA) class for cost saving.

-> Note that the retention period of a file in Standard storage resets each time the file is accessed.

-> Files, once accessed in the IA class, are then moved back to Standard storage.

-> Note that file metadata and files under 128 KB cannot be transferred to the IA storage class.

-> Lifecycle management can be turned on and off as deemed fit by the users.

6. Durability: Multi Availability Zone presence accounts for the high durability of the Elastic File System.

7. Transfer: Data can be transferred from on-premises to EFS in the cloud using the AWS DataSync service. DataSync can also be used to transfer data between multiple EFS file systems across regions.

8. Multiple server architectures: EFS provides a shared file system, so applications that require multiple servers to share a single file system can use EFS.

9. Big Data Analytics: Virtually infinite capacity and extremely high throughput make EFS highly suitable for storing files for big data analysis.

10. Reliable data file storage: EBS data is stored redundantly in a single Availability Zone, whereas EFS data is stored redundantly in multiple Availability Zones, making it more robust and reliable than EBS.

11. Media Processing: High capacity and high throughput make EFS highly favorable for processing big media files.
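The lifecycle management rules from item 5 can be sketched as a small simulation. This is a simplified model that follows the rules as written in this post; the names (`EfsFile`, `access`, `apply_lifecycle`) are hypothetical, days are plain integers, and it does not call any AWS API.

```python
from dataclasses import dataclass

MIN_IA_SIZE_BYTES = 128 * 1024  # files under 128 KB stay in Standard


@dataclass
class EfsFile:
    name: str
    size_bytes: int
    last_access_day: int = 0
    storage_class: str = "STANDARD"


def access(file, today):
    """Accessing a file resets its timer and returns it to Standard."""
    file.last_access_day = today
    file.storage_class = "STANDARD"


def apply_lifecycle(files, today, retention_days):
    """Move files not accessed for retention_days to Infrequent Access (IA)."""
    for f in files:
        idle_days = today - f.last_access_day
        if (f.storage_class == "STANDARD"
                and f.size_bytes >= MIN_IA_SIZE_BYTES
                and idle_days >= retention_days):
            f.storage_class = "IA"


video = EfsFile("video.mp4", size_bytes=5_000_000)
note = EfsFile("note.txt", size_bytes=1_000)

apply_lifecycle([video, note], today=30, retention_days=30)
print(video.storage_class, note.storage_class)  # IA STANDARD

access(video, today=31)
print(video.storage_class)                      # STANDARD
```

The small file never leaves Standard because of the 128 KB rule, and the large file comes back to Standard as soon as it is accessed again, at which point its retention timer starts over.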

4) Amazon FSx: Amazon FSx is a fully managed file server service. AWS manages the hardware (setting up the servers and the volumes) and also the software (setting up, patching, maintaining, and backing up the servers). Amazon FSx helps you launch and run high-performance file systems with just a few clicks while avoiding tasks like provisioning hardware, configuring software, or taking backups. It lets you use the rich feature sets and fast performance of the most commonly used open-source and commercially licensed file systems.

Features of Amazon FSx:

1) Simple and fully managed: Helps you launch a fully managed, highly reliable file system quickly and easily.

2) Highly available and durable: Amazon FSx provides a variety of deployment options to match your workload's availability and durability requirements.

3) Secure and compliant: Amazon FSx automatically encrypts your data at rest and in transit. To control network access to your file system, it lets you run your file systems in an Amazon Virtual Private Cloud (Amazon VPC).

4) Fast delivery: Amazon FSx file systems are designed to deliver fast, predictable, scalable, and consistent performance. It provides you with high read and write speeds and consistent low-latency data access. It lets you choose the storage type and increase storage capacity at any time according to your needs.

5) Pay only for used resources: It offers a wide range of SSD and HDD storage options, which helps you optimize storage price and performance for your workload. Data compression helps reduce the storage consumed by your file system and its backups.

6) Easy integration with other AWS services: To make it easy to develop, deploy, and run your workloads, Amazon FSx file systems can be integrated with other AWS services, such as Amazon S3, Amazon CloudWatch, AWS CloudTrail, AWS KMS, Amazon SageMaker, Amazon WorkSpaces, Amazon AppStream 2.0, Amazon Elastic Container Service (Amazon ECS), Amazon Elastic Kubernetes Service (Amazon EKS), AWS Batch, and AWS ParallelCluster.

5) Amazon S3 Glacier: Amazon S3 Glacier is the backup and archival storage provided by AWS. It is an extremely low-cost, long-term, durable, and secure storage service that is ideal for backup and archival needs. In many of its operations, S3 Glacier is similar to S3, and it interacts directly with S3 through S3 lifecycle policies.

6) AWS Storage Gateway: AWS Storage Gateway is a simple gateway that lets on-premises applications store, access, or archive data in the AWS cloud. It runs as a virtual machine on a hypervisor on one of the machines in your data center and connects to AWS storage such as S3, S3 Glacier, or EBS. It provides a highly optimized, network-resilient, and low-cost way to move data to the cloud. Storage Gateway also supports legacy backup stores such as tapes, as virtual tapes backed up directly to S3 Glacier.
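Going back to the S3 lifecycle policies mentioned under Amazon S3 Glacier, here is a minimal sketch of what such a policy might look like when written as a Python dictionary. The rule ID, the `logs/` prefix, and the 90-day threshold are made-up values for illustration; the overall shape (a list of rules with a status, a filter, and transitions) follows the S3 lifecycle configuration format.

```python
import json

# Hypothetical rule: move objects under "logs/" to Glacier after 90 days.
lifecycle_configuration = {
    "Rules": [
        {
            "ID": "archive-old-logs",        # made-up rule name
            "Status": "Enabled",
            "Filter": {"Prefix": "logs/"},   # made-up prefix
            "Transitions": [
                {"Days": 90, "StorageClass": "GLACIER"},
            ],
        }
    ]
}

# Print the policy as JSON to see what would be sent to S3.
print(json.dumps(lifecycle_configuration, indent=2))
```

If you use boto3, a dictionary like this is what you would typically pass as the `LifecycleConfiguration` argument of the S3 client's `put_bucket_lifecycle_configuration` call, together with your own bucket name.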
