What is Deep learning?

What is Artificial intelligence

What is Machine Learning

Applications of Deep Learning

Importance of Deep Learning

Popular Libraries for Deep Learning

Difference between Deep learning (Unstructured data) and Machine learning (structured data)

What is Deep learning

Deep learning is a subfield of machine learning that deals with algorithms inspired by the structure and function of the brain.

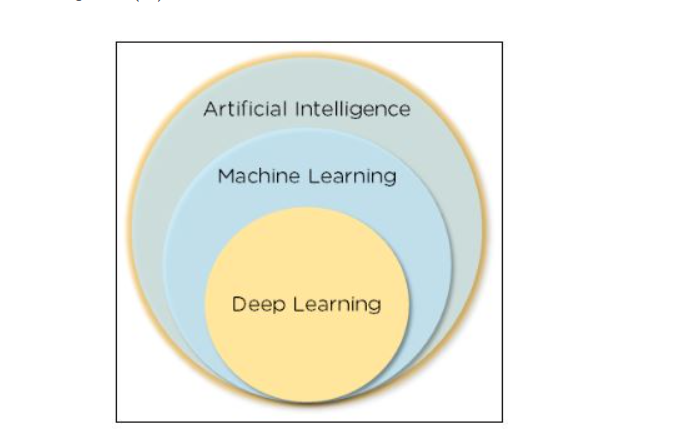

Deep learning is a subset of machine learning, which is in turn a part of artificial intelligence (AI).

Example

Consider the simple scenario of analyzing an image. Assume that the input image is divided into a rectangular grid of pixels. The first layer takes in the raw pixel values. The second layer detects the edges in the image. The next layer combines the edges into nodes, and the layer after that builds branches from the nodes. Finally, the output layer detects the full object. Feature extraction proceeds layer by layer: the output of each layer becomes the input of the next.
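To make the "edge" step concrete, here is a minimal sketch in plain Python. The 5x5 image and the 3x3 filter are made-up values for illustration; in a real network the filter weights would be learned during training rather than written by hand.

```python
# Hypothetical 5x5 grayscale image: dark (0) on the left, bright (9) on the right.
image = [
    [0, 0, 9, 9, 9],
    [0, 0, 9, 9, 9],
    [0, 0, 9, 9, 9],
    [0, 0, 9, 9, 9],
    [0, 0, 9, 9, 9],
]

# Hand-picked 3x3 filter that responds to left-to-right brightness changes.
# Assumption for illustration: a trained network would learn such weights.
vertical_edge_kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]


def convolve2d(img, kernel):
    """Slide the kernel over the image (no padding, stride 1).

    This is the cross-correlation form that convolutional layers use.
    """
    k = len(kernel)
    out_rows = len(img) - k + 1
    out_cols = len(img[0]) - k + 1
    output = []
    for r in range(out_rows):
        row = []
        for c in range(out_cols):
            total = 0
            for i in range(k):
                for j in range(k):
                    total += img[r + i][c + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


edge_map = convolve2d(image, vertical_edge_kernel)
for row in edge_map:
    print(row)  # each row prints [27, 27, 0]
```

The large values mark the positions where the window straddles the dark-to-bright boundary, and the zero marks the uniformly bright region. Later layers would take a map like this as their input.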

"Deep" means that the network has multiple hidden layers between its input and its output.

Deep learning is an application of machine learning that uses complex algorithms and deep neural nets to train a model.
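As a rough illustration of why hidden layers matter, the sketch below passes inputs through one hidden layer and one output layer. The weights are set by hand (not learned) so that the network computes XOR, a function that a single linear layer cannot represent. The helper names `dense` and `forward` are made up for this example.

```python
def relu(x):
    """Rectified linear unit: negative values become zero."""
    return max(0.0, x)


def dense(inputs, weights, biases, activation=None):
    """One fully connected layer: a weighted sum per unit, then an optional activation."""
    outputs = []
    for unit_weights, bias in zip(weights, biases):
        z = sum(w * x for w, x in zip(unit_weights, inputs)) + bias
        outputs.append(activation(z) if activation else z)
    return outputs


# Hand-set weights for illustration only; training would normally find these.
hidden_weights = [[1.0, 1.0], [1.0, 1.0]]
hidden_biases = [0.0, -1.0]
output_weights = [[1.0, -2.0]]
output_biases = [0.0]


def forward(x):
    # The output of the hidden layer is the input of the output layer.
    hidden = dense(x, hidden_weights, hidden_biases, activation=relu)
    return dense(hidden, output_weights, output_biases)[0]


for x in ([0, 0], [0, 1], [1, 0], [1, 1]):
    print(x, forward(x))
```

The four inputs produce 0.0, 1.0, 1.0, and 0.0, which is the XOR truth table. A deep network stacks many such layers.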

What is Artificial intelligence

Artificial intelligence is the ability of a machine to imitate intelligent human behavior.

What is Machine Learning

Machine learning allows a system to learn and improve from experience automatically, without being explicitly programmed for each case.
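A small sketch of what "learning from experience" can look like in practice: the program below is never told the rule behind the data and instead adjusts two numbers to reduce its prediction error. The data points, the learning rate, and the step count are made-up values for illustration, and gradient descent is only one of many possible learning methods.

```python
# Hypothetical training data generated from the rule y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

# The model starts out knowing nothing: prediction = w * x + b.
w, b = 0.0, 0.0
learning_rate = 0.05  # assumed value, small enough for stable updates here


def mean_squared_error(w, b):
    """Average squared gap between predictions and targets."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


initial_error = mean_squared_error(w, b)

for step in range(2000):
    # Gradients of the mean squared error with respect to w and b.
    grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / len(xs)
    grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / len(xs)
    # Move each parameter a small step in the direction that lowers the error.
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b

final_error = mean_squared_error(w, b)
print(f"w={w:.3f}, b={b:.3f}, error {initial_error:.3f} -> {final_error:.6f}")
```

After training, `w` and `b` end up close to 2 and 1, the values used to generate the data, and the error is far lower than at the start.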

Applications of Deep Learning

Deep learning is used to forecast weather events such as rain, and it is being explored for early warning of natural hazards such as earthquakes and tsunamis, which helps in taking the necessary precautions. With deep learning, machines can comprehend speech and provide the required output. It also enables machines to recognize people and objects in the images fed to them. Deep learning models help advertisers leverage data for real-time bidding and targeted display advertising. Some common applications, each with an example, are listed below.

Image Recognition and Classification:

Example: ImageNet Large Scale Visual Recognition Challenge, where deep learning models like Convolutional Neural Networks (CNNs) are used to classify objects in images.

Object Detection:

Example: Autonomous vehicles use deep learning to detect and classify objects on the road, such as pedestrians, cars, and traffic signs.

Natural Language Processing (NLP):

Example: Sentiment analysis, chatbots, and machine translation are NLP applications that utilize deep learning models like Recurrent Neural Networks (RNNs) and Transformers.

Speech Recognition:

Example: Voice assistants like Siri and Google Assistant employ deep learning models to convert spoken language into text and perform tasks.

Recommendation Systems:

Example: Netflix and Amazon use deep learning for personalized content recommendations to improve user engagement.

Anomaly Detection:

Example: In cybersecurity, deep learning can identify unusual network behavior that may indicate a security breach.

Healthcare:

Example: Deep learning models are used for medical image analysis, such as detecting tumors in medical scans or predicting disease outcomes.

Autonomous Vehicles:

Example: Self-driving cars rely on deep learning for perception tasks, such as recognizing road signs, pedestrians, and lane markings.

Generative Models:

Example: Generative Adversarial Networks (GANs) can be used to create realistic images, videos, and other content, as well as deepfakes.

Reinforcement Learning:

Example: Deep reinforcement learning is used in game playing (e.g., AlphaGo), robotics, and optimizing real-world systems like traffic management.

Time Series Forecasting:

Example: Deep learning models such as Recurrent Neural Networks (RNNs), including their Long Short-Term Memory (LSTM) variant, are used for financial market predictions, weather forecasting, and demand forecasting.

Fraud Detection:

Example: Banks and credit card companies employ deep learning for detecting fraudulent transactions based on patterns in transaction data.

Language Generation:

Example: OpenAI's GPT models can generate coherent and context-aware text, which has applications in content generation, creative writing, and more.

Game Playing:

Example: Deep reinforcement learning has been applied to play games like chess, Go, and video games, achieving superhuman performance.

Robotics:

Example: Deep learning is used in robot control and vision systems to improve tasks like object manipulation, navigation, and perception.

Environmental Monitoring:

Example: Deep learning can be applied to analyze satellite imagery for tasks like deforestation detection, crop health assessment, and climate modeling.

Retail and Inventory Management:

Example: Retailers use deep learning for inventory optimization, demand forecasting, and dynamic pricing strategies.

Drug Discovery:

Example: Deep learning models are employed in drug design and discovery, speeding up the process of identifying potential new pharmaceutical compounds.

Energy Consumption Optimization:

Example: Deep learning can be used to optimize energy consumption in buildings and industrial processes by predicting energy usage patterns.

Quality Control and Manufacturing:

Example: Deep learning models are applied to automate quality control in manufacturing processes by identifying defects in products.

These are just a few examples of the many applications of deep learning across different domains. Deep learning's flexibility and ability to handle complex data make it a powerful tool for solving a wide range of problems.

Importance of Deep Learning

Traditional machine learning works best with structured and semi-structured data, while deep learning can work with both structured and unstructured data such as images, audio, and text.

Deep learning models can learn complex, highly non-linear relationships that traditional machine learning algorithms often struggle to capture without extensive feature engineering.

Traditional machine learning algorithms usually rely on hand-engineered features extracted from labeled sample data, while deep learning takes large volumes of raw data as input and learns to extract features from it automatically.

The performance of traditional machine learning algorithms tends to plateau as the amount of data increases, whereas deep learning models often keep improving with more data.

Pattern Recognition: Deep learning excels in recognizing complex patterns and structures within data, making it valuable for tasks like image and speech recognition.

Automation: Deep learning can automate tasks that were previously time-consuming and labor-intensive, improving efficiency and productivity.

Big Data Processing: It enables the handling and analysis of large datasets, extracting meaningful insights and knowledge from vast amounts of information.

Personalization: Deep learning allows for personalized recommendations and experiences in fields such as e-commerce, entertainment, and content delivery.

Medical Advancements: It aids in the early detection of diseases, medical image analysis, drug discovery, and personalized medicine, improving healthcare outcomes.

Natural Language Understanding: Deep learning has revolutionized NLP, enabling chatbots, language translation, and sentiment analysis, among other applications.

Improved Image and Video Analysis: Deep learning powers technologies like facial recognition, object detection, and video content understanding, enhancing security and media analysis.

Financial Modeling: Deep learning models can make predictions and risk assessments, aiding in financial trading, fraud detection, and investment strategies.

Autonomous Systems: Deep learning plays a crucial role in the development of autonomous vehicles, drones, and robots by enabling perception and decision-making.

Enhanced User Experience: It leads to better user experiences through voice assistants, recommendation systems, and interactive applications.

Climate Modeling: Deep learning is used for analyzing environmental data, understanding climate patterns, and developing predictive models for climate change.

Complex Problem Solving: It can tackle complex problems that involve numerous variables and dependencies, making it useful in a variety of scientific and engineering disciplines.

Innovation in Art and Creativity: Deep learning has been used to generate art, music, and literature, pushing the boundaries of human creativity.

Improved Accuracy and Generalization: Deep learning models have shown remarkable generalization capabilities, leading to high accuracy in various tasks.

Flexible Architecture: Deep learning models can be adapted and fine-tuned for a wide range of applications, providing a versatile tool for researchers and developers.

Real-time Processing: Deep learning models can process data in real-time, allowing for applications that require quick decisions and responses.

Scientific Discoveries: Deep learning aids in scientific research by processing and analyzing data in fields like particle physics, genomics, and materials science.

Economic Impact: Deep learning has the potential to create new industries, job opportunities, and economic growth through innovation and technology advancements.

Human-AI Collaboration: It can enhance human capabilities, enabling collaboration between humans and AI systems in decision-making and problem-solving.

Global Accessibility: With open-source libraries and resources, deep learning is becoming more accessible to researchers, developers, and organizations worldwide.

Popular Libraries for Deep Learning

Keras is among the most commonly used frameworks.

Deep learning is a subfield of machine learning that focuses on neural networks with many layers (deep neural networks). There are several popular libraries for deep learning, and each has its own strengths and use cases. Here are explanations and examples for some of the most popular deep learning libraries:

TensorFlow:

TensorFlow is an open-source deep learning framework developed by Google. It is known for its flexibility and scalability.

Example:

    import tensorflow as tf

    # Define a simple neural network in TensorFlow
    model = tf.keras.Sequential([
        tf.keras.Input(shape=(784,)),  # 784 input features per sample
        tf.keras.layers.Dense(64, activation='relu'),    # hidden layer
        tf.keras.layers.Dense(10, activation='softmax')  # 10 output classes
    ])

Keras:

Keras is a high-level deep learning API. It originally ran on top of several backends, including TensorFlow and Theano; recent versions (Keras 3) support TensorFlow, JAX, and PyTorch. It is known for its user-friendly and simple API.

Example:

    from tensorflow import keras

    # Build a neural network using Keras
    model = keras.Sequential([
        keras.Input(shape=(784,)),  # 784 input features per sample
        keras.layers.Dense(64, activation='relu'),    # hidden layer
        keras.layers.Dense(10, activation='softmax')  # 10 output classes
    ])
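To get a feel for the size of the 784-input, 64-unit, 10-unit network in the Keras example above, the sketch below counts its trainable parameters by hand. The helper name `dense_parameter_count` is made up for this example; it assumes each dense layer has one weight per input-unit pair plus one bias per unit.

```python
def dense_parameter_count(n_inputs, n_units):
    """Weights (n_inputs * n_units) plus one bias per unit."""
    return n_inputs * n_units + n_units


# Input size followed by the number of units in each dense layer.
layer_sizes = [784, 64, 10]

counts = [
    dense_parameter_count(n_in, n_out)
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
]
total = sum(counts)
print(counts, total)  # [50240, 650] 50890
```

Almost all of the parameters sit in the first layer, because it connects every one of the 784 inputs to every one of the 64 hidden units.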

PyTorch:

PyTorch is an open-source deep learning framework developed by Facebook's AI Research lab. It is known for its dynamic computation graph, which makes it popular among researchers.

Example:

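    import torch
    import torch.nn as nn

    # Define a simple neural network in PyTorch
    class SimpleNN(nn.Module):
        def __init__(self):
            super().__init__()  # initialize nn.Module before adding layers
            self.fc1 = nn.Linear(784, 64)  # input -> hidden layer
            self.fc2 = nn.Linear(64, 10)   # hidden layer -> 10 output classes

        def forward(self, x):
            x = torch.relu(self.fc1(x))
            # Softmax over the class dimension turns scores into probabilities
            x = torch.softmax(self.fc2(x), dim=1)
            return x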
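The softmax used in the output layer of these examples turns raw scores into probabilities that sum to 1. Below is a minimal plain-Python sketch of the idea, with made-up scores; library implementations operate on whole tensors, so this is an illustration of the formula rather than a copy of any library's code.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    # Subtracting the maximum keeps exp() from overflowing on large scores
    # and does not change the result.
    largest = max(logits)
    exps = [math.exp(z - largest) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


scores = [2.0, 1.0, 0.1]  # hypothetical raw outputs for three classes
probs = softmax(scores)
print([round(p, 3) for p in probs])  # [0.659, 0.242, 0.099]
```

The highest score receives the highest probability, and the ordering of the classes is preserved.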

MXNet:

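Apache MXNet is an open-source deep learning framework designed for both efficiency and flexibility. It is known for its support of multiple programming languages and its strong support for GPU acceleration. Note that the project has since been retired by the Apache Software Foundation and is no longer actively developed.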

Example:

    import mxnet as mx
    from mxnet import gluon, autograd

    # Define a simple neural network in MXNet
    net = gluon.nn.Sequential()
    with net.name_scope():
        net.add(gluon.nn.Dense(64, activation='relu'))
        net.add(gluon.nn.Dense(10))

Caffe:

Caffe is a deep learning framework developed by the Berkeley Vision and Learning Center (BVLC). It is known for its speed and efficiency, especially in applications like image recognition.

Example:

Caffe model definitions are typically written in a .prototxt file, which specifies the network architecture, and trained models are stored in a .caffemodel file.

These are some of the popular deep learning libraries, and each has its own unique features and community support. The choice of library often depends on your specific needs, preferences, and the type of deep learning projects you are working on.

Difference between Deep learning (Unstructured data) and Machine learning (structured data)

The choice between traditional machine learning (ML) and deep learning (DL) depends on various factors, including the nature of the problem, the size and complexity of the dataset, the availability of computational resources, and the specific requirements of the task at hand.

Here's a general comparison of when each approach is commonly used:

Traditional Machine Learning:

Structured Data: Traditional ML algorithms, such as linear regression, decision trees, random forests, support vector machines (SVM), and gradient boosting machines (GBM), are well-suited for structured data with a relatively small number of features.

Interpretability: Traditional ML models are often easier to interpret and understand compared to deep learning models. This is particularly important in domains where interpretability is crucial, such as healthcare and finance.

Resource Efficiency: Traditional ML models typically require fewer computational resources for training and inference compared to deep learning models, making them more suitable for deployment in resource-constrained environments.

Deep Learning:

Unstructured Data: Deep learning excels at tasks involving unstructured data, such as images, audio, text, and sequences. Convolutional neural networks (CNNs) are commonly used for image and video processing, recurrent neural networks (RNNs) for sequential data like text and time series, and transformers for natural language processing (NLP).

Feature Learning: Deep learning models are capable of automatically learning features from raw data, reducing the need for manual feature engineering. This is particularly advantageous when working with high-dimensional data or data with complex patterns.

State-of-the-Art Performance: Deep learning models have achieved state-of-the-art performance in various domains, including computer vision, natural language understanding, and speech recognition. They have demonstrated superior performance on tasks like image classification, object detection, machine translation, and speech synthesis.

Large-Scale Data: Deep learning models often require large amounts of data for training to generalize well. With the availability of big data and powerful computational resources (e.g., GPUs and TPUs), deep learning has become increasingly feasible for a wide range of applications.

In summary, traditional machine learning is still widely used for many tasks, especially when dealing with structured data and when interpretability is important. Deep learning, on the other hand, is more commonly used for tasks involving unstructured data and has achieved remarkable success in domains where it can leverage large amounts of data and computational power. Ultimately, the choice between ML and DL depends on the specific requirements and constraints of the problem, as well as the available resources and expertise.
