In deep learning, especially in tasks like classification, it's common to represent labels using one-hot encoding. One-hot encoding is a way to represent categorical data, where each category is represented as a binary vector, and only one bit is "hot" (1) to indicate the category. This is particularly useful when dealing with categorical labels or classes.

Here's a step-by-step explanation of how labels are represented in one-hot encoded format in deep learning:

Class Labels:

Assume you have a set of class labels for your task, for example three classes: "Cat," "Dog," and "Bird."

Indexing:

Assign a unique index to each class. For instance:

"Cat" might be assigned index 0.

"Dog" might be assigned index 1.

"Bird" might be assigned index 2.

One-Hot Encoding:

Represent each class label as a binary vector of length equal to the number of classes.

Set the element corresponding to the index of the true class to 1, and all other elements to 0.

Example:

"Cat" would be represented as [1, 0, 0].

"Dog" would be represented as [0, 1, 0].

"Bird" would be represented as [0, 0, 1].
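The three steps above can be sketched in plain Python, without any framework. The names `class_names`, `class_to_index`, and `one_hot` are illustrative choices for this sketch and are not part of TensorFlow.

```python
# Step 1: the class labels from the example above.
class_names = ["Cat", "Dog", "Bird"]

# Step 2: assign each class a unique index (Cat -> 0, Dog -> 1, Bird -> 2).
class_to_index = {name: i for i, name in enumerate(class_names)}


def one_hot(label, num_classes=len(class_names)):
    """Return a list with 1 at the label's index and 0 everywhere else."""
    vector = [0] * num_classes
    vector[class_to_index[label]] = 1
    return vector


# Step 3: encode every class.
for name in class_names:
    print(name, one_hot(name))
```

Each printed vector has exactly one element set to 1, at the position given by the class index.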

TensorFlow Example:

In TensorFlow, you can use the tf.one_hot function to convert class indices to one-hot encoded vectors. Here's an example:

import tensorflow as tf

# Example class indices

class_indices = [0, 1, 2]

# Number of classes (set explicitly; len(class_indices) would count samples, not classes)

num_classes = 3

# Convert to one-hot encoded format

one_hot_labels = tf.one_hot(class_indices, depth=num_classes)

In this example, one_hot_labels is a TensorFlow tensor of shape (3, 3): one row per label, with a single 1 in each row. By default tf.one_hot returns float32 values.

When training a neural network in TensorFlow, you would typically use this one-hot encoded format for the labels in your training data. For example, if you have an image classification task with three classes, your labels might look like:

labels = [[1, 0, 0], # Cat

[0, 1, 0], # Dog

[0, 0, 1]] # Bird

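One-hot encoded labels are a natural fit for classification with a categorical crossentropy loss. Each label has the same shape as the model's softmax output, so the loss can compare the predicted class probabilities with the target directly. If you prefer to keep labels as integer class indices, Keras also provides a sparse_categorical_crossentropy loss that accepts them without one-hot encoding.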
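To see why one-hot labels pair well with this loss, here is a minimal sketch of the cross-entropy formula in plain Python. The function below is a hand-written illustration, not the Keras implementation, and the probability vectors are made-up values standing in for softmax outputs.

```python
import math


def categorical_crossentropy(one_hot_label, predicted_probs):
    """Cross-entropy between a one-hot label and a predicted distribution."""
    return -sum(y * math.log(p) for y, p in zip(one_hot_label, predicted_probs))


dog = [0, 1, 0]                 # one-hot label for "Dog"
confident = [0.05, 0.90, 0.05]  # hypothetical prediction, mostly "Dog"
unsure = [0.40, 0.30, 0.30]     # hypothetical prediction, spread out

print(categorical_crossentropy(dog, confident))  # equals -log(0.90)
print(categorical_crossentropy(dog, unsure))     # equals -log(0.30)
```

Because every element of the label except one is 0, the sum collapses to the negative log of the probability assigned to the true class, so a more confident correct prediction gives a smaller loss.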

Full Code

import tensorflow as tf

# Example class indices

class_indices = [0, 1, 2]

# Number of classes (set explicitly; len(class_indices) would count samples, not classes)

num_classes = 3

# Convert to one-hot encoded format

one_hot_labels = tf.one_hot(class_indices, depth=num_classes)

# Start a TensorFlow session to evaluate the result

with tf.Session() as sess:

result = sess.run(one_hot_labels)

# Print the result

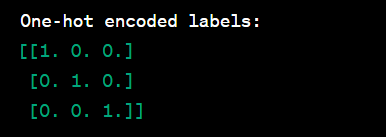

print("One-hot encoded labels:")

print(result)

Each row in the result represents a one-hot encoded vector corresponding to a class index. The element at the index of the true class is set to 1, and all other elements are set to 0.
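Going the other way, from one-hot rows back to class indices, is an argmax over each row. The sketch below does this in plain Python on a hard-coded copy of the expected result; `decode_one_hot` is a hypothetical helper name. In TensorFlow, `tf.argmax(one_hot_labels, axis=1)` performs the same operation.

```python
def decode_one_hot(rows):
    """Return the index of the largest element in each row."""
    return [max(range(len(row)), key=row.__getitem__) for row in rows]


# Hard-coded copy of the one-hot rows for class indices 0, 1, 2.
result = [[1.0, 0.0, 0.0],
          [0.0, 1.0, 0.0],
          [0.0, 0.0, 1.0]]
class_names = ["Cat", "Dog", "Bird"]

indices = decode_one_hot(result)
print(indices)
print([class_names[i] for i in indices])
```

The same argmax is what turns a model's softmax output into a predicted class at inference time.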

When training a Keras model with a categorical crossentropy loss, these one-hot vectors are passed as the targets. For a classification task with three classes, the labels look like:

labels = [[1, 0, 0], # Class 0

[0, 1, 0], # Class 1

[0, 0, 1]] # Class 2

Full code

import tensorflow as tf

# Placeholder sizes and data for illustration; replace them with your own dataset

num_classes = 3

input_size = 4

num_epochs = 5

batch_size = 3

x_train = tf.random.normal((3, input_size))  # three random samples, one per label

# Example one-hot encoded labels

one_hot_labels = tf.constant([[1.0, 0.0, 0.0],  # Class 0

                              [0.0, 1.0, 0.0],  # Class 1

                              [0.0, 0.0, 1.0]])  # Class 2

# Build a simple model (example architecture)

model = tf.keras.Sequential([

    tf.keras.layers.Dense(units=64, activation='relu', input_shape=(input_size,)),

    tf.keras.layers.Dense(units=num_classes, activation='softmax'),  # Softmax output for multi-class classification

])

# Compile the model

model.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])

# Train the model with one-hot encoded labels

model.fit(x_train, one_hot_labels, epochs=num_epochs, batch_size=batch_size)

From dataset

from __future__ import print_function

This line imports print_function from the __future__ module. It makes print() behave as a function under Python 2. Under Python 3, print() is already a function, so the import has no effect and can be dropped.

Import MNIST data

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets("/tmp/data/", one_hot=True)

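These lines import the input_data module from tensorflow.examples.tutorials.mnist and call its read_data_sets() function. The function reads the MNIST dataset from the specified directory, downloading it first if it is missing, and returns an object with train, validation, and test splits. With one_hot=True, each label is returned as a one-hot vector of length 10 instead of an integer digit. This tutorial module shipped with TensorFlow 1.x and is not included in TensorFlow 2.x, where tf.keras.datasets.mnist.load_data() is the usual way to load MNIST.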
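To make the effect of the one_hot argument concrete, the sketch below converts integer digit labels to one-hot rows with NumPy. The `digit_labels` array is made-up sample data standing in for MNIST labels, and indexing an identity matrix is just one common way to do the conversion, not necessarily what read_data_sets() does internally.

```python
import numpy as np

# Hypothetical integer digit labels, standing in for MNIST labels
# loaded without one-hot encoding.
digit_labels = np.array([5, 0, 4, 1, 9])
num_classes = 10  # MNIST has ten digit classes, 0 through 9

# Row i of the identity matrix is the one-hot vector for class i,
# so indexing the identity matrix with the labels encodes them all at once.
one_hot_labels = np.eye(num_classes)[digit_labels]

print(one_hot_labels.shape)  # (5, 10)
print(one_hot_labels[0])
```

Each row has a single 1 at the position of its digit, which is the format a 10-way softmax output layer is compared against.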

import tensorflow as tf

This line imports the TensorFlow library. TensorFlow is a popular machine learning library that can be used for a variety of tasks, including image processing.

Extract images from dataset

images = mnist.train.images

This line extracts the training images from the MNIST dataset. The images variable is a NumPy array with one row per image, where each row holds the 784 pixel values of a flattened 28x28 image.

Save images to disk

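import os

os.makedirs("/tmp/mnist_images", exist_ok=True)  # save_img does not create the directory

for i in range(len(images)):

    # save_img expects a (height, width, channels) array, so add a channel axis

    image = images[i].reshape((28, 28, 1))

    tf.keras.preprocessing.image.save_img(f"/tmp/mnist_images/{i}.png", image)

This loop iterates over the images array and saves each image to disk in PNG format. The output directory is created first, because save_img() does not create it. The reshape() method turns each flattened row back into a 28x28 image with a single grayscale channel; the trailing channel axis is needed because save_img() expects a three-dimensional array. The save_img() function from tf.keras.preprocessing.image then writes the image to disk.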
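The reshape step can be tried in isolation with NumPy. The sketch below uses a synthetic array as a stand-in for one MNIST row, assuming rows hold 784 floats in the range [0, 1], and shows the shapes involved. The explicit scaling to 8-bit values is an illustration of what an image writer has to do, not a required step before calling save_img().

```python
import numpy as np

# Hypothetical stand-in for one flattened MNIST image: 784 floats in [0, 1].
flat_image = np.linspace(0.0, 1.0, 28 * 28, dtype=np.float32)

# Restore the 2-D layout, then add a trailing channel axis for grayscale.
image_2d = flat_image.reshape((28, 28))
image_3d = image_2d[..., np.newaxis]

# Scale to 8-bit pixel values, the range used by grayscale PNG files.
pixels = (image_3d * 255).round().astype(np.uint8)

print(image_2d.shape, image_3d.shape, pixels.dtype)
print(pixels.min(), pixels.max())
```

Reshaping does not copy or change the pixel values; it only changes how the same 784 numbers are indexed.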
