What is a Data Lake?

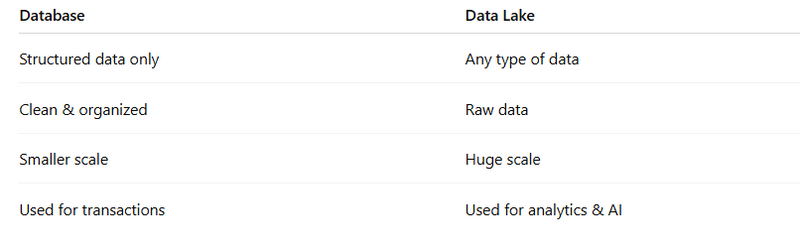

Data Lake vs Database

What is Databricks?

Example with Data Lake + Databricks

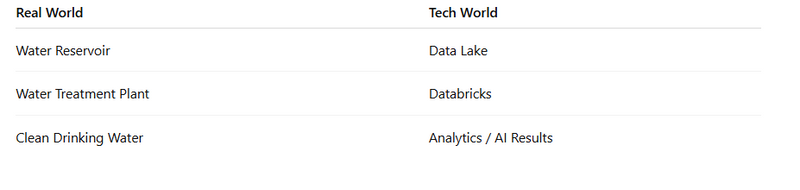

Real-World Analogy

Why Not Just Use Database?

Databricks Extra Power

Final Simple Definition

Objective and MCQ

What is a Data Lake?

Simple Meaning:

A Data Lake is a large storage system where you store all types of data in raw form.

Think of it like:

🏞️ A real lake — where water from rivers, rain, streams all flow into one big place.

Same way:

App data

Logs

Images

Videos

CSV files

JSON files

Database exports

All go into one central storage.

🧱 Key Features of a Data Lake

Stores structured data (tables, Excel, CSV)

Stores semi-structured data (JSON, XML)

Stores unstructured data (images, audio, video)

Data is stored in raw format

Very scalable (petabytes of data)
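To make "raw format" concrete, here is a minimal sketch that uses a local temp folder as a stand-in for lake storage. The `land_raw` helper, the `raw/<source>/` folder layout, and the file names are all assumptions made up for illustration; a real Data Lake would sit on cloud object storage instead.

```python
import json
import tempfile
from pathlib import Path


def land_raw(lake_root: Path, source: str, filename: str, payload: bytes) -> Path:
    """Store a payload untouched under <lake_root>/raw/<source>/<filename>.

    Hypothetical helper: the folder layout is an assumption, not a standard.
    """
    target = lake_root / "raw" / source / filename
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_bytes(payload)  # bytes are written as-is: no parsing, no schema
    return target


# A temp directory plays the role of the lake.
lake = Path(tempfile.mkdtemp(prefix="lake_"))

# Structured, semi-structured, and unstructured data all land the same way.
land_raw(lake, "payments", "2024-01-01.csv", b"txn_id,amount\n1,250\n2,90\n")
land_raw(lake, "app_events", "clicks.json",
         json.dumps([{"user": "u1", "page": "/home"}]).encode())
land_raw(lake, "scans", "xray-001.png", b"\x89PNG\r\n\x1a\n")  # placeholder bytes, not a real image

stored = sorted(p.relative_to(lake).as_posix() for p in lake.rglob("*") if p.is_file())
print(stored)
```

Notice that nothing is validated or converted on the way in. That is the trade-off of a lake: writing is cheap and flexible, and the work of making sense of the data happens later, when it is read.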

🏢 Example

Let’s say you run MyHospitalNow:

You collect:

Patient records

Doctor activity logs

Payment transactions

App click events

Feedback forms

Images & documents

Instead of spreading everything across separate systems, you load it all into a Data Lake.

Later:

Data scientists can analyze trends

You can build AI models

You can generate reports

Data Lake vs Database

What is Databricks?

Now comes the second part.

Simple Meaning:

Databricks is a platform that helps you:

Analyze, process, clean, and build AI models from data stored in a Data Lake.

If Data Lake is storage,

Databricks is the processing & intelligence engine.

🧠 What Databricks Actually Does

Databricks is built on Apache Spark.

It helps you:

Process big data fast

Clean messy raw data

Run analytics

Build machine learning models

Create dashboards

Manage and govern the data in the Data Lake

🏗 Example with Data Lake + Databricks

Let’s continue the MyHospitalNow example:

Step 1:

All raw data is stored in cloud object storage, for example:

AWS S3

Azure Data Lake

Google Cloud Storage

(This is your Data Lake)

Step 2:

You use Databricks to:

Clean duplicate patient records

Analyze doctor performance

Predict patient revisit rate

Build AI model for disease prediction

Generate revenue dashboards

Databricks reads from Data Lake → processes data → gives insights.
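The read → process → insights flow can be sketched in plain Python. This is only a stand-in: on Databricks the same steps would normally run on Spark over files in the lake, and the sample rows, column names, and the definition of "revisit rate" used here (share of patients with more than one visit) are assumptions for illustration.

```python
import csv
import io
from collections import Counter

# Hypothetical raw export; in practice this would be read from lake storage.
RAW_VISITS = """patient_id,name,visit_date
p1,Asha,2024-01-03
p1,Asha,2024-01-03
p2,Ravi,2024-01-05
p1,Asha,2024-02-10
p3,Meera,2024-02-11
"""


def read_rows(text):
    """Read: parse the raw CSV text into a list of dicts."""
    return list(csv.DictReader(io.StringIO(text)))


def drop_duplicates(rows):
    """Process: keep the first row for each (patient_id, visit_date) pair."""
    seen = set()
    unique = []
    for row in rows:
        key = (row["patient_id"], row["visit_date"])
        if key not in seen:
            seen.add(key)
            unique.append(row)
    return unique


def revisit_rate(rows):
    """Insight: fraction of patients who have more than one visit."""
    visits = Counter(row["patient_id"] for row in rows)
    returning = sum(1 for count in visits.values() if count > 1)
    return returning / len(visits) if visits else 0.0


raw = read_rows(RAW_VISITS)
clean = drop_duplicates(raw)
rate = revisit_rate(clean)
print(len(raw), len(clean), round(rate, 2))
```

The duplicate row has to be removed before counting, otherwise the same visit recorded twice would be mistaken for a revisit. That is why cleaning comes before analysis in the steps above.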

Real-World Analogy

Why Not Just Use Database?

Because:

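A traditional database becomes hard and expensive to scale to petabytes

AI models often need raw historical data, not just the current, cleaned-up records

Logs and media files do not fit neatly into the fixed tables of a normal SQL database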
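The point about logs is easier to see with an example. In the sketch below every log line has different fields, which would be awkward to force into one fixed table. Instead the lines are kept raw and a "schema" is applied only when reading. The sample events and the `read_with_schema` helper are hypothetical.

```python
import json

# Hypothetical raw log lines: each event type carries different fields.
RAW_LOG_LINES = [
    '{"event": "login", "user": "u1", "device": "android"}',
    '{"event": "click", "user": "u2", "page": "/doctors", "ms": 120}',
    '{"event": "payment", "user": "u1", "amount": 499.0, "currency": "INR"}',
]


def read_with_schema(lines, fields):
    """Parse raw JSON lines and keep only the requested fields.

    Fields missing from a record come back as None instead of causing an error.
    """
    for line in lines:
        record = json.loads(line)
        yield {field: record.get(field) for field in fields}


# The reader decides which columns matter, at read time.
rows = list(read_with_schema(RAW_LOG_LINES, ["event", "user", "amount"]))

# Every distinct field that appears anywhere in the raw data.
all_fields = sorted({key for line in RAW_LOG_LINES for key in json.loads(line)})
print(rows)
print(all_fields)
```

A different team could read the same raw lines tomorrow with a different field list, without anyone having to alter a table first.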

🧠 Databricks Extra Power

Databricks also provides:

Delta Lake (a storage layer that adds ACID transactions on top of Data Lake files)

Notebooks for collaboration

Auto-scaling clusters

Real-time streaming processing

AI & ML tools built-in
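To get a feel for what "ACID transactions" on top of plain files can mean, here is a toy sketch of the commit-log idea: data files only become visible to readers once a log entry that lists them has been written. This is not Delta Lake's actual file format or API. The `ToyTable` class, the `_log` folder, and the JSON layout are all invented for illustration, and the sketch covers only atomic visibility for a single writer, not full ACID.

```python
import json
import os
import tempfile
from pathlib import Path


class ToyTable:
    """Toy table: readers only see data files listed in committed log entries."""

    def __init__(self, root):
        self.root = Path(root)
        self.log_dir = self.root / "_log"  # hypothetical log folder name
        self.log_dir.mkdir(parents=True, exist_ok=True)

    def write_data_file(self, name, rows):
        """Write a data file. It stays invisible to readers until committed."""
        (self.root / name).write_text(json.dumps(rows))
        return name

    def commit(self, added_files):
        """Publish files by writing the next numbered log entry in one step."""
        version = len(list(self.log_dir.glob("*.json")))
        final = self.log_dir / f"{version:020d}.json"
        tmp = self.log_dir / f"{version:020d}.json.tmp"
        tmp.write_text(json.dumps({"add": added_files}))
        os.replace(tmp, final)  # rename, so readers never see a half-written entry
        return version

    def read(self):
        """Replay the log in order and load only the files it mentions."""
        rows = []
        for entry in sorted(self.log_dir.glob("*.json")):
            for name in json.loads(entry.read_text())["add"]:
                rows.extend(json.loads((self.root / name).read_text()))
        return rows


table = ToyTable(tempfile.mkdtemp(prefix="toy_table_"))

first = table.write_data_file("part-0.json", [{"id": 1}])
table.commit([first])

second = table.write_data_file("part-1.json", [{"id": 2}])  # written, not yet committed
before = table.read()

table.commit([second])
after = table.read()
print(before, after)
```

Between the two commits the second file already exists on disk, yet a reader still gets only the first row. A job that crashes halfway through writing therefore leaves no partial data visible, which is the kind of guarantee plain file storage does not give you on its own.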

🎯 Final Simple Definition

✅ Data Lake:

A central place to store all raw data at large scale.

✅ Databricks:

A platform to process, analyze, and build AI models from Data Lake data.

Objective and MCQ

1.

What is a Data Lake?

A) A relational database

B) A type of data warehouse

C) A centralized storage for raw and varied data

D) A business reporting tool

Answer: C

2.

Which of the following is NOT typically stored in a Data Lake?

A) Images

B) Audio files

C) Only structured tables

D) JSON logs

Answer: C

3.

What type of data format is a Data Lake designed to handle?

A) Structured only

B) Unstructured only

C) Both structured and unstructured

D) Numeric only

Answer: C

4.

Which of these is a common use case for Databricks?

A) Transaction processing

B) In-memory analytics and ML

C) Basic file storage

D) Frontend rendering

Answer: B

5.

Databricks is built on top of which processing engine?

A) Hadoop

B) Spark

C) SQL Server

D) Oracle

Answer: B

6.

Which layer in a Data Lake is typically used for cleaned and conformed data?

A) Raw layer

B) Bronze layer

C) Silver layer

D) Landing zone

Answer: C

7.

Delta Lake is used to provide what kind of capabilities?

A) Low latency only

B) ACID transactions and scalable storage

C) Frontend UI

D) Only BI reporting

Answer: B

8.

The Gold layer in a data lake architecture is used for:

A) Raw data ingestion

B) Analytics and reporting ready tables

C) Storing images

D) Temporary caches

Answer: B

9.

Which of the following is an ingestion pattern used in Data Lakes?

A) ELT only

B) ETL only

C) Both ETL and ELT

D) SQL joins only

Answer: C

10.

Which tool is commonly used for orchestrating ETL jobs?

A) GitHub

B) Airflow

C) Photoshop

D) Slack

Answer: B

11.

Which notebook environment does Databricks mainly use?

A) Jupyter only

B) Zeppelin only

C) Databricks notebooks

D) Excel

Answer: C

12.

Which of the following is used for streaming ingestion in data lakes?

A) Kafka

B) Excel

C) PHP

D) CSS

Answer: A

13.

Data Lake environments can store which of the following?

A) Videos

B) Text logs

C) Images

D) All of the above

Answer: D

14.

Which type of workload is processed by Databricks?

A) Batch

B) Streaming

C) Real-time ML

D) All of the above

Answer: D

15.

What is the role of Unity Catalog in a Data Lakehouse architecture?

A) Storage of PDFs

B) Metadata and governance

C) Rendering webpages

D) Mobile app backend

Answer: B

16.

Which storage layer is considered raw and immutable?

A) Silver

B) Gold

C) Landing/Raw

D) Computing layer

Answer: C

17.

In a Data Lakehouse, where are business key performance metrics stored?

A) Bronze layer

B) Landing zone

C) Gold layer

D) Raw JSON zone

Answer: C

18.

Which of the following is a BI tool used for analytics consumption?

A) Power BI

B) Notepad

C) Eclipse

D) Outlook

Answer: A

19.

Which of these is NOT a responsibility of governance & security?

A) Logs and metrics

B) IAM/RBAC policies

C) Schema lineage

D) HTML page design

Answer: D

20.

What is the main purpose of a Data Lake?

A) Store transactional data only

B) Store all kinds of data and enable analytics

C) Replace front-end applications

D) Only for backups

Answer: B
