What is Loss Function in Deep Learning?

In mathematical optimization and decision theory, a loss or cost function (sometimes also called an error function) is a function that maps an event or values of one or more variables onto a real number intuitively representing some “cost” associated with the event.

In simple terms, the Loss function is a method of evaluating how well your algorithm is modeling your dataset. It is a mathematical function of the parameters of the machine learning algorithm.

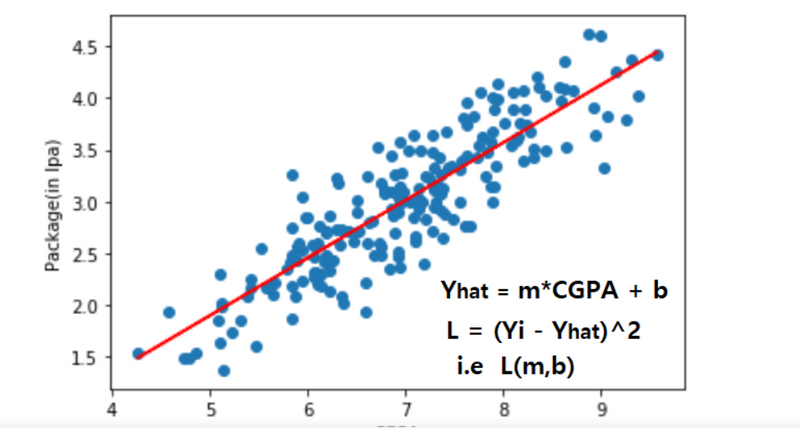

In simple linear regression, prediction is calculated using slope(m) and intercept(b). the loss function for this is the (Yi – Yihat)^2 i.e loss function is the function of slope and intercept.

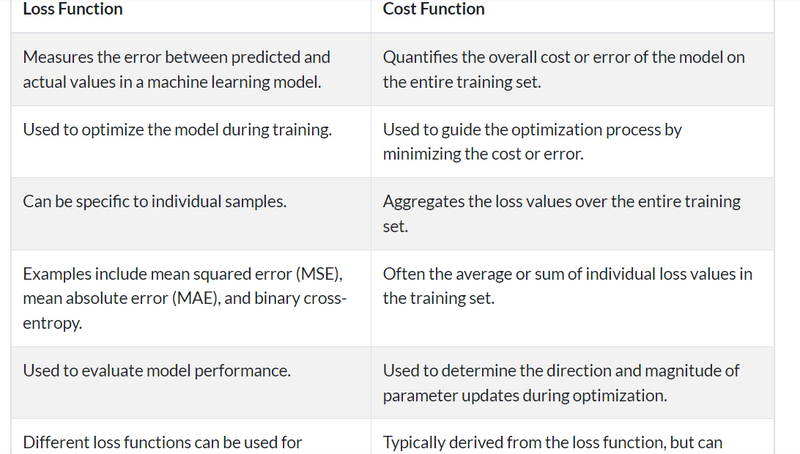

Loss Function

Why Loss Function in Deep Learning is Important?

The loss function is a method of evaluating how well your machine learning algorithm models your featured data set. In other words, loss functions are a measurement of how good your model is at predicting the expected outcome.

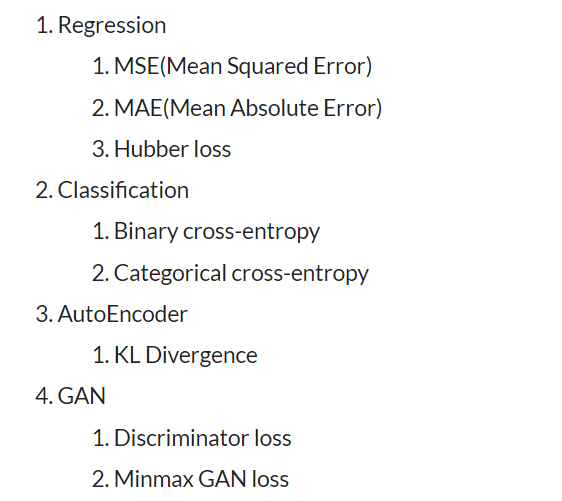

Broadly speaking, loss functions can be grouped into two major categories concerning the types of problems we come across in the real world: classification and regression. In classification problems, our task is to predict the respective probabilities of all classes the problem is dealing with. On the other hand, when it comes to regression, our task is to predict the continuous value concerning a given set of independent features to the learning algorithm.

Types of Loss function

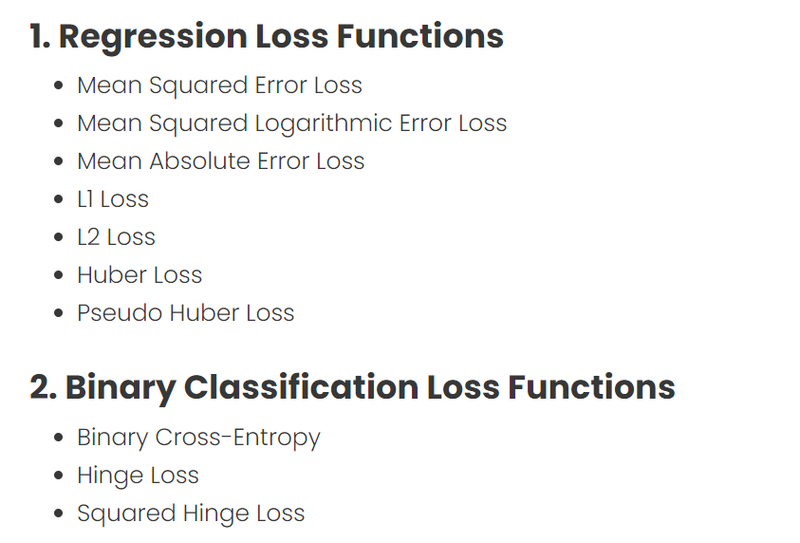

Regression Loss

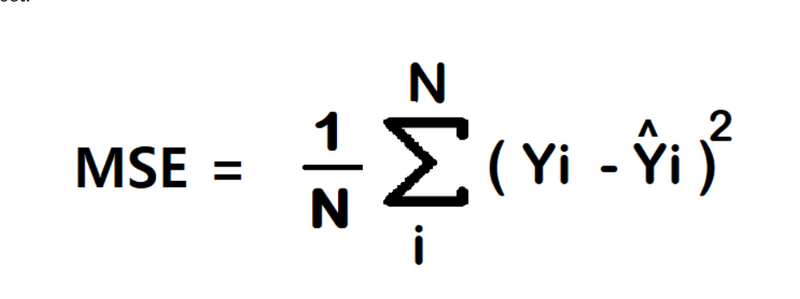

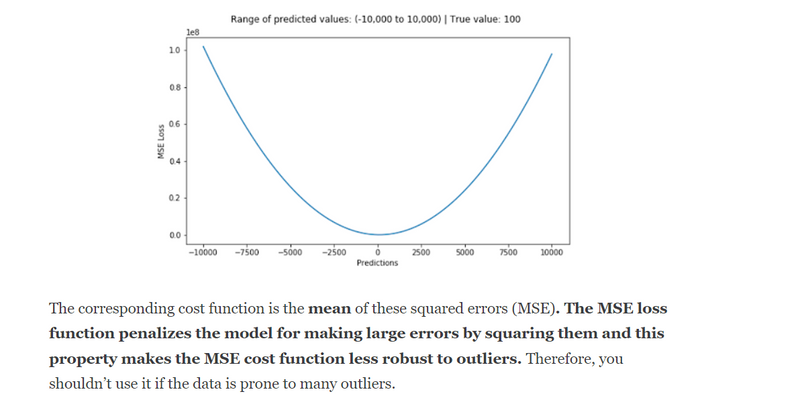

- Mean Squared Error/Squared loss/ L2 loss The Mean Squared Error (MSE) is the simplest and most common loss function. To calculate the MSE, you take the difference between the actual value and model prediction, square it, and average it across the whole dataset.

Advantage

- Easy to interpret.

- Always differential because of the square.

- Only one local minima. Disadvantage

- Error unit in the square. because the unit in the square is not understood properly.

- Not robust to outlier

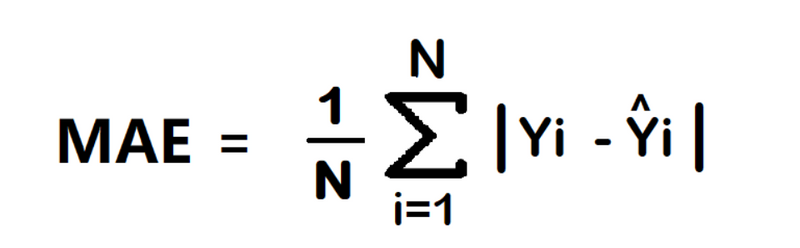

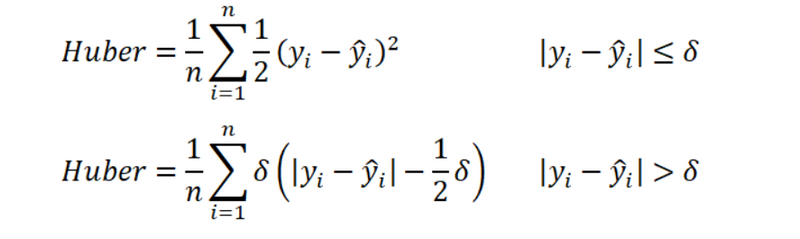

Mean Absolute Error/ L1 loss

The Mean Absolute Error (MAE) is also the simplest loss function. To calculate the MAE, you take the difference between the actual value and model prediction and average it across the whole dataset.

Advantage

- Intuitive and easy

- Error Unit Same as the output column.

- Robust to outlier Disadvantage

Graph, not differential. we can not use gradient descent directly, then we can subgradient calculation.

Note – In regression at the last neuron use linear activation function.Huber Loss

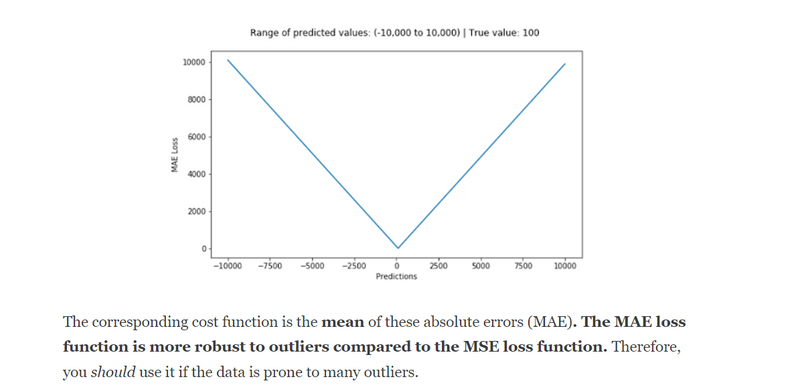

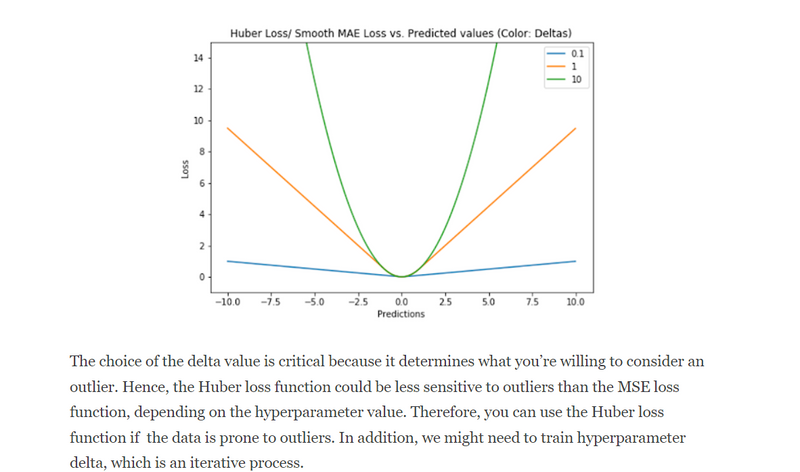

In statistics, the Huber loss is a loss function used in robust regression, that is less sensitive to outliers in data than the squared error loss.

n – the number of data points.

y – the actual value of the data point. Also known as true value.

ŷ – the predicted value of the data point. This value is returned by the model.

δ – defines the point where the Huber loss function transitions from a quadratic to linear.

Advantage

Robust to outlier

It lies between MAE and MSE.

Disadvantage

Its main disadvantage is the associated complexity. In order to maximize model accuracy, the hyperparameter δ will also need to be optimized which increases the training requirements.

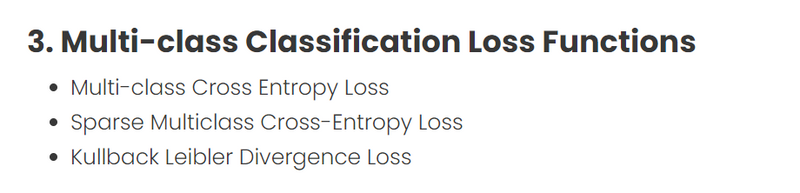

B. Classification Loss

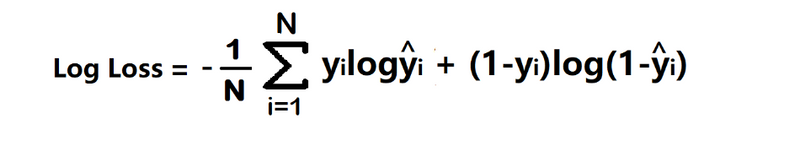

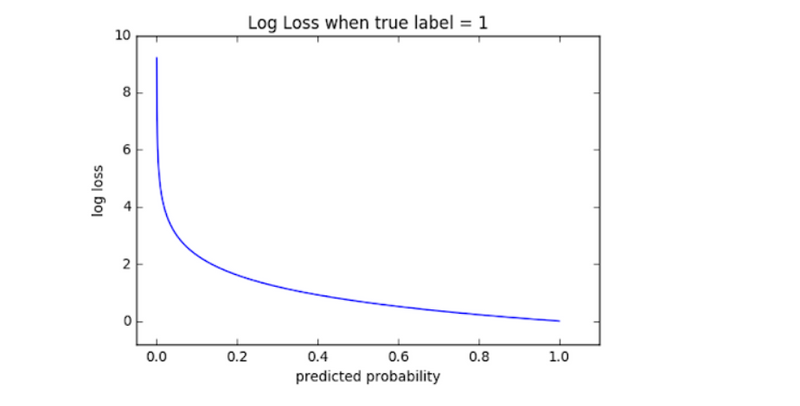

- Binary Cross Entropy/log loss It is used in binary classification problems like two classes. example a person has covid or not or my article gets popular or not.

Binary cross entropy compares each of the predicted probabilities to the actual class output which can be either 0 or 1. It then calculates the score that penalizes the probabilities based on the distance from the expected value. That means how close or far from the actual value.

yi – actual values

yihat – Neural Network prediction

Advantage –

A cost function is a differential.

Disadvantage –

Multiple local minima

Not intuitive

Note – In classification at last neuron use sigmoid activation function.

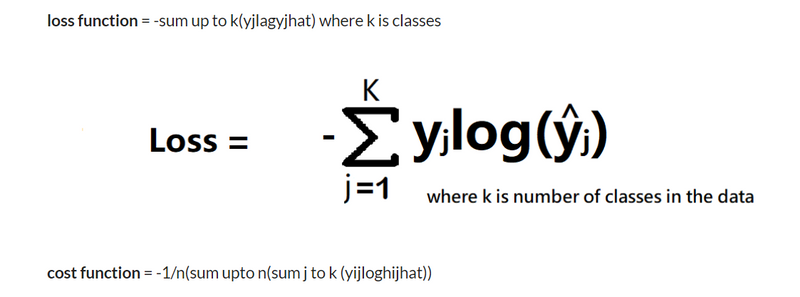

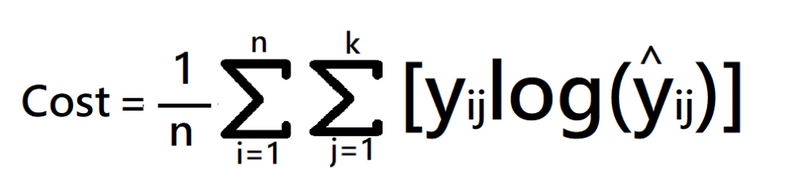

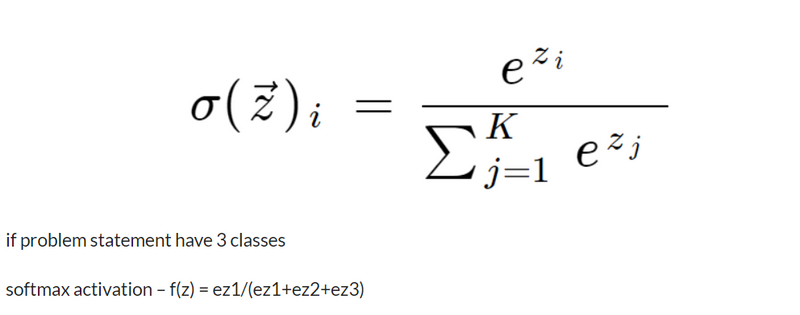

- Categorical Cross Entropy Categorical Cross entropy is used for Multiclass classification and softmax regression.

loss function = -sum up to k(yjlagyjhat) where k is classes

cost function = -1/n(sum upto n(sum j to k (yijloghijhat))

where

k is classes,

y = actual value

yhat – Neural Network prediction

Note – In multi-class classification at the last neuron use the softmax activation function

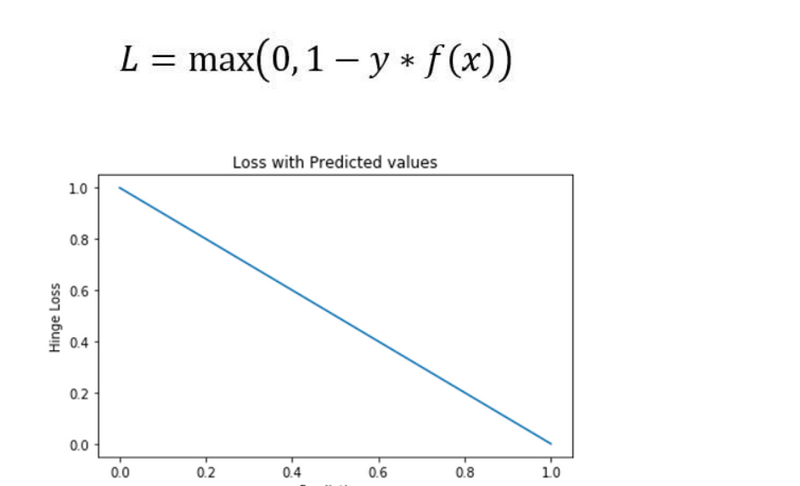

HINGE LOSS

The second most common loss function used for classification problems and an alternative to the cross-entropy loss function is hinge loss, primarily developed for support vector machine (SVM) model evaluation.

Hinge loss penalizes the wrong predictions and the right predictions that are not confident. It’s primarily used with SVM classifiers with class labels as -1 and 1. Make sure you change your malignant class labels from 0 to -1.

. What is a loss function?

A. A loss function is a mathematical function that quantifies the difference between predicted and actual values in a machine learning model. It measures the model’s performance and guides the optimization process by providing feedback on how well it fits the data.

Q2. What is loss and cost function in deep learning?

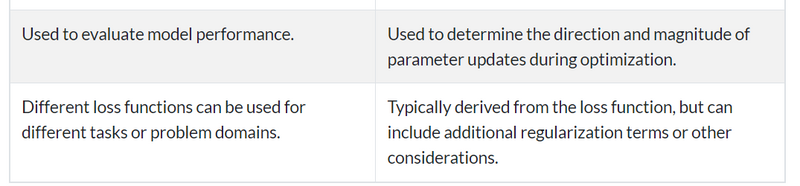

A. In deep learning, “loss function” and “cost function” are often used interchangeably. They both refer to the same concept of a function that calculates the error or discrepancy between predicted and actual values. The cost or loss function is minimized during the model’s training process to improve accuracy.

Q3. What is L1 loss function in deep learning?

A. L1 loss function, also known as the mean absolute error (MAE), is commonly used in deep learning. It calculates the absolute difference between predicted and actual values. L1 loss is robust to outliers but does not penalize larger errors as strongly as other loss functions like L2 loss.

Q4. What is loss function in deep learning for NLP?

A. In deep learning for natural language processing (NLP), various loss functions are used depending on the specific task. Common loss functions for tasks like sentiment analysis or text classification include categorical cross-entropy and binary cross-entropy, which measure the difference between predicted and true class labels for classification tasks in NLP.

Q5. How is the loss function used to train a neural network?

- Backpropagation is an algorithm that updates the weights of the neural network in order to minimize the loss function.

- Backpropagation works by first calculating the loss function for the current set of weights.

- Next, it calculates the gradient of the loss function with respect to the weights.

- Finally, it updates the weights in the direction opposite to the gradient.

- The network is trained until the loss function stops decreasing.

Top comments (0)