What is Data Scraping?

Data scraping, commonly called web scraping, is the process of obtaining data from a website and transferring it into a spreadsheet or another local file on your computer. It is an efficient way of collecting data from websites and, in certain instances, reusing that data on a different website.

It entails using automated programs or scripts to extract detailed data from web pages, including text, photos, tables, links, and other structured data. Data scraping enables users to gather data from several websites simultaneously, reducing the effort and time required compared to traditional data collection.

Web scraping software (commonly known as “bots”) is constructed to explore websites, scrape the relevant pages, and extract meaningful data. This software can handle large amounts of data by automating and streamlining this process.

How Does Data Scraping Work?

The data scraping process includes the following steps:

Choose the Target Website

Decide which website or online source will provide the data you need.

Choose What Data to Scrape

Identify the specific data pieces or information, such as product specifications, client feedback, price data, or any other pertinent data you want to gather from the website.

Generate Scraping Code

Build scripts or programs that traverse web pages, find the needed data, and extract it, using programming languages like Python or Java or dedicated scraping tools. These scripts might connect to APIs or use HTML parsing techniques to obtain the data.
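As a rough illustration of the HTML parsing approach, the sketch below uses only Python's standard-library `html.parser` module. The markup in `SAMPLE_HTML`, the `product`, `name`, and `price` class names, and the `extract_products` helper are all made up for this example; a real page will have its own structure.

```python
from html.parser import HTMLParser

# Hypothetical markup standing in for a downloaded product page.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Kettle</span><span class="price">$24.99</span></li>
  <li class="product"><span class="name">Toaster</span><span class="price">$31.50</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects the text of <span class="name"> and <span class="price"> elements."""

    def __init__(self):
        super().__init__()
        self.products = []   # one dict per <li class="product">
        self._field = None   # field currently being read, if any

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "li" and css_class == "product":
            self.products.append({})
        elif tag == "span" and css_class in ("name", "price") and self.products:
            self._field = css_class

    def handle_data(self, data):
        # Only keep text that appears inside a span we care about.
        if self._field and data.strip():
            self.products[-1][self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None


def extract_products(html):
    """Return a list of {"name": ..., "price": ...} dicts found in the HTML."""
    parser = ProductParser()
    parser.feed(html)
    return parser.products


products = extract_products(SAMPLE_HTML)
```

Libraries such as BeautifulSoup wrap this kind of parsing in a friendlier interface, but the underlying idea of locating elements and pulling out their text is the same.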

Scraping Code or Software Execution

Run the scraping code or program against the target website so that it browses the site, explores its sections, and retrieves the needed data. This step may involve handling different page structures, pagination, or authentication systems.
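Pagination is often handled with a loop that keeps following a "next page" link. The following is a minimal sketch under stated assumptions: `fetch_page` is a hypothetical callable that downloads and parses one page, and `FAKE_SITE` is an in-memory stand-in for a real website so the example runs without network access.

```python
def scrape_all_pages(start_url, fetch_page, max_pages=50):
    """Follow "next page" links until there are none left.

    fetch_page(url) is a hypothetical callable that returns
    (items, next_url), with next_url set to None on the last page.
    """
    items, url, seen = [], start_url, set()
    # Stop on the last page, on a link we already visited, or at the page limit.
    while url and url not in seen and len(seen) < max_pages:
        seen.add(url)
        page_items, url = fetch_page(url)
        items.extend(page_items)
    return items


# Stand-in for a real site: three pages held in memory.
FAKE_SITE = {
    "/products?page=1": (["kettle", "toaster"], "/products?page=2"),
    "/products?page=2": (["blender"], "/products?page=3"),
    "/products?page=3": (["mixer"], None),
}

all_items = scrape_all_pages("/products?page=1", FAKE_SITE.__getitem__)
```

The `seen` set and the `max_pages` limit guard against sites whose "next" links loop back on themselves.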

Data Cleaning and Validation

To ensure the quality and usefulness of the data, you may need to clean, validate, and transform it after collecting it. In this step, you remove unnecessary or redundant information, handle missing values, and convert the data into the required structure or format.
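A cleaning step might look something like the sketch below. The record layout (`name` and `price` keys) and the rule of dropping incomplete rows are assumptions chosen for illustration; depending on your goals you may prefer to fill in missing values rather than discard them.

```python
def clean_records(raw_records):
    """Trim whitespace, parse prices, and drop incomplete or duplicate rows."""
    cleaned, seen = [], set()
    for record in raw_records:
        name = (record.get("name") or "").strip()
        price_text = (record.get("price") or "").strip()
        if not name or not price_text:
            continue  # missing value: skip the row
        try:
            # "$1,049.00" -> 1049.0
            price = float(price_text.replace("$", "").replace(",", ""))
        except ValueError:
            continue  # price is not a number: skip the row
        key = (name.lower(), price)
        if key in seen:
            continue  # duplicate of a row we already kept
        seen.add(key)
        cleaned.append({"name": name, "price": price})
    return cleaned


raw = [
    {"name": "  Kettle ", "price": "$24.99"},
    {"name": "kettle", "price": "24.99"},      # duplicate
    {"name": "Toaster", "price": None},        # missing price
    {"name": "Blender", "price": "$1,049.00"},
    {"name": "Mixer", "price": "N/A"},         # unparseable price
]
cleaned = clean_records(raw)
```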

Data Storage or Analysis

When the data collected has been cleaned and verified, it can be saved to a database or a spreadsheet or processed further for visualization, analysis, or interaction with other systems.
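As one possible way to store the results in a database, the sketch below uses Python's built-in `sqlite3` module. The `products` table, its columns, and the `save_products` helper are hypothetical, and an in-memory database is used so the example leaves no files behind.

```python
import sqlite3


def save_products(conn, products):
    """Create the table if needed, then insert rows, replacing rows with the same name."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS products (name TEXT PRIMARY KEY, price REAL)"
    )
    conn.executemany(
        "INSERT OR REPLACE INTO products (name, price) VALUES (:name, :price)",
        products,
    )
    conn.commit()


conn = sqlite3.connect(":memory:")  # use a file path instead to persist the data
save_products(conn, [{"name": "Kettle", "price": 24.99},
                     {"name": "Toaster", "price": 31.5}])
# A later scrape with a new price overwrites the old row.
save_products(conn, [{"name": "Kettle", "price": 22.49}])
rows = conn.execute("SELECT name, price FROM products ORDER BY name").fetchall()
```

Keying the table on the product name means repeated scrapes update existing rows instead of piling up duplicates.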

Benefits of Data Scraping

Some of the benefits of data scraping include the following:

Improved Decision Making

Businesses can acquire current, real-time information from various websites using data scraping. Data extraction gives organizations the vital data they need to make effective decisions regarding their operations, investments, products, and services. It helps businesses make strategic choices on advertising campaigns, developing new products, etc.

By evaluating customer experiences, purchase trends, or feedback, businesses can adjust their goods, services, or advertising strategies to meet consumer demands. This consumer-centric strategy improves decision-making by aligning products with consumer requirements.

Businesses can maintain competitiveness by using data scraping to comprehend market dynamics and determine prices.

Cost Savings

Extracting data by hand is expensive because it requires extensive staff and sizable resources. Web scraping addresses this issue by automating the work, much as other online techniques have automated other manual tasks.

Many of the scraping services available on the market are cost-effective and budget-friendly, although the actual cost depends on the volume of data required, the efficiency of the extraction techniques, and your goals. A web scraping API is one of the most popular options for keeping costs down.

Data scraping may prove to be a cost-effective data collection method, particularly for individuals and small enterprises who do not have the financial resources to buy expensive data sets.

Time Savings

Data scraping dramatically decreases the time and effort needed to collect data from websites by automating the data-gathering process. It makes it possible to retrieve information from several sources simultaneously, handle vast quantities of data, run on an ongoing basis, and integrate with existing workflows, resulting in time savings and increased productivity.

Once a scraping script or tool has been created, it can be reused for similar websites or data sources. This saves time because you do not have to build a brand-new data-gathering procedure from scratch every time.

Enhanced Productivity

When web scraping is executed effectively, it increases the productivity of the sales and marketing departments. The marketing team can use relevant scraped data to understand how a product is performing and to create new, improved marketing plans that meet consumer demands.

With data gathered from web scraping, the teams can create targeted strategies and gain better insights. The data also helps in putting marketing tactics into practice. The sales staff can determine which target audience groups are likely to be profitable and where revenue is growing, and can then closely monitor sales to maximize profits.

Competitive Advantage

Web scraping can be an excellent approach to getting the information you require for competitor research. Data scraping might allow you to organize and represent relevant and useful data while assisting you in quickly gathering competitive data.

Data scraping may benefit you in gathering data on competitors, such as:

- URLs of Competitors’ Websites

- Contact Details

- Social Networking Accounts and Followers

- Advertising and Competitive Prices

- Comparing Products and Services

The data can be easily exported into .csv files once it has been gathered. Data visualization software can help you discuss what you discover with other organization members.
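A CSV export can be sketched with Python's standard `csv` module. The column names and the sample row below are invented for illustration, and the `to_csv` helper writes to an in-memory buffer so it can be tried without creating a file.

```python
import csv
import io


def to_csv(rows, fieldnames):
    """Serialize a list of dicts to CSV text with a header row."""
    buffer = io.StringIO(newline="")  # let the csv module control line endings
    writer = csv.DictWriter(buffer, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


competitor_rows = [
    {"competitor": "Example Store", "product": "Kettle", "price": 24.99},
    {"competitor": "Acme, Inc.", "product": "Toaster", "price": 31.5},
]
csv_text = to_csv(competitor_rows, ["competitor", "product", "price"])
```

To write to disk instead, the same writer can be pointed at a file opened with `open(path, "w", newline="")`. Values that contain commas, such as the second competitor name, are quoted automatically.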

Why Scrape Website Data?

Using data scraping, you can gather specific items from many websites, including product specifications, pricing details, client feedback, current events, and any other relevant data. Access to such a variety of sources yields insights that can be put to many uses.

Businesses may discover new consumers and create leads by scraping data from websites. Businesses can create focused marketing campaigns and reach out to potential customers by using contact information that includes email addresses or mobile numbers from appropriate websites or databases. Website data scraping makes it easier to compile data by obtaining data from several websites and organizing it on a single platform or database.

Data Scraping Tools

The tools and techniques generally used for data scraping are as follows:

Web Scraping Tools and Software

Web scraper software can be used to discover new data either manually or automatically. These tools retrieve the most recent data, store it, and make it accessible, which benefits anyone seeking to gather data from a website. Here are some of the well-known data scraping tools and software:

- Mozenda is a data extraction tool that facilitates gathering data from websites. Additionally, it offers services for data visualization.

- Data Scraping Studio is a free web scraping tool for extracting data from websites, HTML, XML, and PDF documents. Only Windows users can presently access the desktop version.

- The Web Scraper API from Oxylabs is made to gather real-time accessible website information from almost every website. It is a dependable tool for fast and reliable retrieval of data.

- Diffbot is among the best data extraction tools available today. It enables you to extract products, posts, discussions, videos, or photographs from web pages using its Analyze API, which automatically recognizes the type of page.

- Octoparse is a user-friendly, no-code web scraping tool. It provides cloud storage for the extracted information and offers IP rotation to prevent IP addresses from being blacklisted. Scraping can be scheduled for any particular time, and pages that use endless scrolling are supported. Results can be downloaded in CSV or Excel format or retrieved through an API.

Web Scraping APIs

Web scraping APIs are specialized APIs created to make web scraping tasks easier. They simplify online scraping by offering a structured, automated mechanism to access and retrieve website data. Some known web scraping APIs are as follows:

ParseHub API: ParseHub is a web scraping platform that provides an API for developers to communicate with its scraping system. With the help of the ParseHub API, users can run scraping projects, manage them, access the data they've collected, and carry out several other tasks programmatically.

Apify API: Apify is an online automation and scraping service that offers developers access to its crawling and scraping features via an API. The Apify API enables users to programmatically configure proxies and request headers, organize and execute scraping processes, retrieve scraped data, and carry out other functions.

Import.io API: Import.io is a cloud-based service for collecting data, and it provides developers with an API so they can incorporate scraping functionality into their apps. Using the API, users can create and manage scraping tasks, obtain scraped data, and perform data integration and transformation operations.

Scraping with Programming Languages

The following programming languages, along with their libraries and packages, are commonly used for data scraping:

Python

- BeautifulSoup: A library that makes navigating through and retrieving data from HTML and XML pages simple.

- Scrapy: A robust web scraping framework that manages challenging scraping operations, such as website crawling, pagination, and data retrieval.

- Requests: A library that allows users to send HTTP requests and interface with web APIs, enabling data retrieval from API-enabled websites.

JavaScript

Puppeteer: A Node.js library that controls headless Chrome or Chromium browsers, enabling scraping of dynamic sites that rely on JavaScript.

Cheerio: A jQuery-inspired, quick, and adaptable library for Node.js that is used to parse and work with HTML/XML documents.

R

rvest: An R package that offers web scraping tools, such as CSS selection, HTML parsing, and website data retrieval.

RSelenium: An R interface to Selenium WebDriver that allows scraping of websites that need JavaScript rendering or interactions with users.

PHP

Simple HTML DOM: A PHP package that parses HTML files and uses CSS selectors to retrieve data from them.

Goutte: A PHP web scraping package that uses the Guzzle HTTP client to present an easy-to-use interface for data scraping operations.

Java

Jsoup: A Java package that parses HTML and XML documents and enables data collection using DOM traversal or CSS selectors.

Selenium WebDriver: A browser automation framework with Java bindings that offers APIs for automating web page interactions, which enables scraping of pages as they render in a real browser.

Ruby

Nokogiri: A Ruby gem that offers a user-friendly API for processing HTML and XML documents.

Watir: A Ruby library that automates browser interactions for web scraping operations.

Best Practices for Data Scraping

There are certain things one can do for an effective and efficient data scraping process:

- Always read and follow the policies and terms of service of the websites you are scraping.

- Scraping unnecessary sites or unnecessary data wastes resources and slows down the data extraction process. Targeted scraping increases efficiency by narrowing the scope of data extraction.

- Employ caching techniques to save scraped data locally and avoid repeated scraping.

- Websites occasionally modify their layout, return errors, or add CAPTCHAs to prevent scraping efforts. Implement error-handling techniques to handle these scenarios smoothly.

- Be a responsible online scraper by following every regulation and ethical rule, not overloading servers with queries, and not collecting private or sensitive data.

- Keep constant track of the scraping procedure to ensure it works as intended. Keep an eye out for modifications to website structure, file formats, or anti-scraping methods.
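Two of the practices above, caching and error handling, can be combined in a small wrapper. This is only a sketch: `CachedFetcher` and its parameters are hypothetical names, the `fetch` argument stands for whatever function actually downloads a page, and treating `OSError` as the retryable error is an assumption (Python's `ConnectionError` and `urllib.error.URLError` are both subclasses of it).

```python
import time


class CachedFetcher:
    """Wraps a fetch function with an in-memory cache and simple retries."""

    def __init__(self, fetch, max_attempts=3, base_delay=1.0, sleep=time.sleep):
        self._fetch = fetch              # hypothetical callable: url -> page text
        self._max_attempts = max_attempts
        self._base_delay = base_delay
        self._sleep = sleep              # injectable so tests need not wait
        self._cache = {}

    def get(self, url):
        if url in self._cache:
            return self._cache[url]      # served locally, no request sent
        for attempt in range(1, self._max_attempts + 1):
            try:
                page = self._fetch(url)
            except OSError:
                if attempt == self._max_attempts:
                    raise                # give up after the last attempt
                # Exponential backoff: base_delay, then 2x, 4x, ...
                self._sleep(self._base_delay * 2 ** (attempt - 1))
            else:
                self._cache[url] = page
                return page
```

The cache here lives only for the lifetime of the object. A longer-running scraper would more likely persist pages to disk or a database, and would also need a rule for when cached pages become stale.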

Challenges and Limitations of Data Scraping

Some of the challenges and limitations of the data scraping process are as follows:

Ethical and Legal Issues

The ethical and legal implications of data scraping can be complex. To avoid legal repercussions when extracting data, you must comply with each website's terms of service and any applicable legal constraints. Furthermore, scraping private or confidential information without proper approval is unethical. It is essential to follow the relevant regulations and laws while respecting privacy rights.

Frequent Updates on the Websites

Websites often modify their basic layout to keep up with the latest UI/UX developments and introduce new features. Frequent changes to a site's code make it difficult for web scrapers to keep working, since each scraper is written against the structure the website had at the time the scraper was created.

CAPTCHA

To differentiate between humans and scraping software, websites frequently use CAPTCHA (Completely Automated Public Turing Test to Tell Computers and Humans Apart), which presents visual or logical puzzles that are simple for people to solve but challenging for scrapers. Bot developers can incorporate various CAPTCHA-solving solutions to keep scraping uninterrupted. While CAPTCHA-solving technology might help maintain a constant data feed, it may still cause some scraping delays.

IP Blocking

Web scrapers are frequently prevented from accessing website data by IP blocking. Most of the time, this occurs when a website notices many requests from a particular IP address. To stop the scraping operation, the website either blocks the IP altogether or limits its access.
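Since blocking is usually triggered by a burst of requests from one address, one common precaution is to space requests out. The sketch below shows a simple throttle; the `Throttle` class is a hypothetical helper, and a suitable interval depends on the site, so the values used are placeholders rather than recommendations.

```python
import time


class Throttle:
    """Enforces a minimum delay between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self._min_interval = min_interval  # seconds between requests
        self._clock = clock                # injectable for testing
        self._sleep = sleep
        self._last = None                  # time of the previous request

    def wait(self):
        """Block until at least min_interval has passed since the last call."""
        now = self._clock()
        if self._last is not None:
            remaining = self._min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now
```

Calling `wait()` immediately before each request keeps the scraper from sending requests faster than the chosen interval. It does not guarantee that a site will not block the scraper, but it reduces the load placed on the server.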

Data Quality

Although data scraping gives users access to a wealth of data, it can be challenging to guarantee the reliability and accuracy of the data. Websites may have out-of-date or erroneous information, which may affect evaluation and assessment. Appropriate data validation, cleaning, and verification methods are required to guarantee the accuracy of the scraped data.

Use Cases of Successful Data Scraping

The best-known real-world uses of data scraping are as follows:

Weather Forecasting Applications

Image: weather forecasting (Source: Analyticsteps)

Weather forecasting businesses use data scraping to gather weather information from websites, government databases, and weather APIs. They can examine previous trends, estimate meteorological conditions, and give consumers reliable forecasts by scraping the information gathered. This makes it possible for people, organizations, and emergency response agencies to make decisions and take necessary action based on weather forecasts.

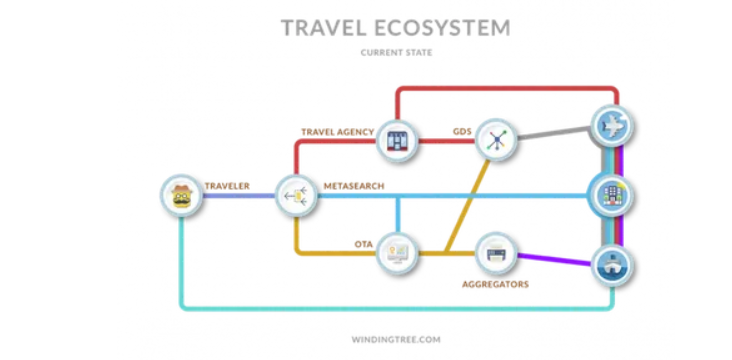

Tours and Travel Agencies

Travel brokers collect data from travel-related websites, including hotels, airlines, and car rental companies. They can provide users with thorough comparisons and guide them in locating the best offers by scraping rates, availability, and other pertinent data. Offering a single platform for obtaining data from various sources enables users to save time and effort.

Image: how data scraping works in tours and travel (Source: Datahunt)

Social Media Monitoring

Businesses and companies scrape social media sites to follow interactions, monitor brand mentions, and track consumer feedback. They can learn about consumer needs, views, and patterns by analyzing social media data. This data supports establishing marketing strategies, enhancing consumer involvement, and promptly addressing consumer issues.

Image: social media (Source: Proxyway)

Market Analysis

Financial institutions and investment organizations use data scraping to gather real-time financial data, such as share prices, market movements, and financial news stories. By scraping data from multiple sources, they can analyze economic conditions, discover investment opportunities, and make informed trading decisions. Data scraping helps them stay current on market trends and react swiftly to changing industry dynamics.
